this precedent?

Senator Chuck Grassley, who is a mere five years younger than sliced bread, has taken it upon himself to delve into the high-tech world of artificial intelligence hallucinations after a pair of judges withdrew opinions upon discovery of a few minor issues like “quotes that don’t exist” and “defendants who aren’t actually defendants.” The Supreme Court hallucinating an individual right from the history and text of the Second Amendment shall remain blissfully unexamined. If only the judges had claimed their propositions were “deeply rooted in the Nation’s history and tradition,” they might have been spared the indignity of having to reply to a letter from the chair of the Judiciary Committee.

Over the summer, two federal judges, Judge Julien Neals of New Jersey and Judge Henry Wingate of Mississippi, issued orders that showed all the hallmarks of AI-hallucinated citations. In the Judge Neals case, the order included inaccurate factual references, quotes that don’t appear in the cited cases, and the misattribution of a case to the wrong jurisdiction. Judge Wingate’s order also botched facts and misquoted the law, but included the added dimension of referencing parties and witnesses who aren’t involved in the case at all.

“No less than the attorneys who appear before them, judges must be held to the highest standards of integrity, candor, and factual accuracy,” Grassley wrote both judges. “Indeed, Article III judges should be held to a higher standard, given the binding force of their rulings on the rights and obligations of litigants before them.”

Grassley is doing a little grandstanding here, trying to stir up some excitement over public AI anxiety while the government shuts down and his constituents wonder why the administration has destroyed Iowa’s agricultural exports while bailing out Argentina so it can undercut the market. That was a concern for the oft-tweeting nonagenarian a few weeks ago, but since then the Trump administration has more or less shrugged at the prospect of protecting American farmers, and Grassley dutifully transitioned to a “golly gee, I’m sure Glorious Leader Trump will think of something” posture while Iowa’s economy flounders.

But, hey, his lack of focus is our gain! If he manages to receive answers to his AI queries, we could learn a few things about how federal judges are approaching the technology:

Did you, your law clerks, or any court staff use any generative AI or automated drafting/research tool in preparing any version of the [filings at issue]? If so, please identify each tool, its version (if known), and precisely how it was used.

What’s the bright line for “automated drafting/research tool”? Writing an opinion by uploading a file and asking ChatGPT Jesus to take the wheel would be reckless, but there’s a wide range of AI usage that falls short of that. Are we going to start nitpicking judges for using Word with Copilot enabled? What if they have an industry-specific tool like BriefCatch? Are we second-guessing Westlaw’s CoCounsel? Does Grammarly count?

While academically interesting, an honest answer to this question isn’t going to provide much insight into best practices, and might smear perfectly good tools along the way. The only question that matters is, “hey, how did this fabricated nonsense get in there?” Everything else is a distraction.

Did you, your law clerks, or any court staff at any time enter sealed, privileged, confidential, or otherwise non-public case information into any generative AI or automated drafting/research tool in preparing any version of the [filings]?

This veers even further from oversight into theater. Neither of these fiascos involved any confidential information. These were all decided on publicly docketed material. If anything, the filings had the opposite problem: they made up stuff that wasn’t in the record. Loading confidential material into consumer AI remains a huge concern for practitioners, but it’s not the issue in these cases.

Please describe the human drafting and review performed in preparing the Court’s July 20, 2025 Order before issuance—by you, chambers staff, and court staff—including cite-checking, verification of quoted statutory text, party identification, and validation that every cited case exists and stands for the proposition stated.

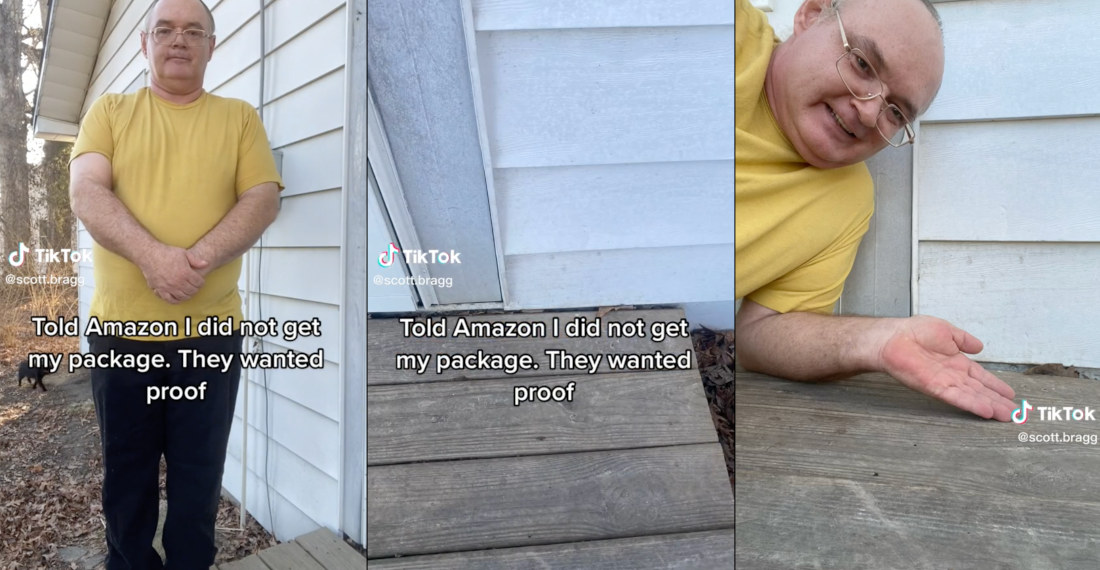

This is the legislative inquiry equivalent of the Amazon delivery meme: If the process involved actual checking, this doesn’t happen.

For each misstatement identified in the defendants’ unopposed motion to clarify/correct—whether references to non-party plaintiffs and defendants, incorrect statutory quotations, and declarations of individuals who do not appear in this record—please explain the cause of the error and what internal review processes failed to identify and correct each error before issuance.

There it is! This question! This should be the first question.

Please explain how the Court differentiates between what it characterizes as “clerical” mistakes in its [filing], and non-existent citations filed by an attorney in an active case before you for which the Court required the attorney to submit a sworn affidavit explaining the errors and outlining remedial measures to prevent recurrence.

Yeah, this wasn’t a clerical mistake except in the most literal sense that it was probably caused by a clerk. No one made a typo; they included outright fake stuff. That’s more than clerical. Attempting to pass it off as clerical suggests a lack of candor from the judges, which is as troubling as it was unnecessary. Just own up to the mistake! Use it as a teachable moment! The whole country is screwing around with this technology and making mistakes… this is an opportunity to caution the legal industry.

Please explain why the Court’s original [filing] was removed from the public record and whether you will re-docket the order to preserve a transparent history of the Court’s actions in this matter.

Because it was… wrong? I’m thinking that’s why they took it off the docket, Chuck. Did AI draft this question?

Please explain why the Court’s corrected [filing] omits any reference to the withdrawn [filing], excludes that decision from any discussion of procedural history, and does not include a “CORRECTED” notation at the top of the document to indicate that the decision was substantively altered.

A slightly better question than before, but still unnecessary. We need to be less concerned about how the final record of the case appears, and more focused on “what went wrong and how to avoid it going forward.”

Please detail all corrective measures you have implemented in your chambers since July 20, 2025 to prevent recurrence of substantive citation and quotation errors in future opinions and orders, including proper record preservation.

An important question, but also an invitation to hurl babies out with the bath water. When AI hallucinations struck Butler Snow, the firm started purging its site of AI discussion, a regrettable move since the material on the site provided exactly the sort of advice that could’ve kept the firm out of trouble. Everyone should make building out “standard operating procedures” and “best practices” for AI usage a top priority, but the tone of this question is just going to prompt judges to reject AI out of hand.

Imagine failing to double-check a summer associate’s work and being called to “detail all corrective measures you have implemented.” Artificial intelligence tools are basically very dumb but also very fast summer associates. Take the work product, remember to thoroughly check it, and you’ll be fine. We don’t need to turn it into a Capitol Hill inquiry.

Unless someone is dumb enough to try to let AI decide the legal issue instead of just writing it up. But no one is actually that stupid, right?

The judges have until October 13 to respond, which is nice because it allows them to get an answer in before the judiciary runs out of money. Or maybe the judges will follow Chief Justice John Roberts’s lead and inform Grassley that the separation of powers requires them to give the senator the finger.

What we, as the public, actually need is an explanation from the judges so the rest of the judiciary can avoid making the same mistakes. And that’s pretty much it. The fault isn’t in using AI; it’s in the humans getting lazy with their checking.

(Read the letters on the next page…)

Joe Patrice is a senior editor at Above the Law and co-host of Thinking Like A Lawyer. Feel free to email any tips, questions, or comments. Follow him on Twitter or Bluesky if you’re interested in law, politics, and a healthy dose of college sports news. Joe also serves as a Managing Director at RPN Executive Search.