There is a certain dark comedy in watching the law firm that advises OpenAI on its “safe and ethical deployment” of artificial intelligence rush to the federal bankruptcy docket seeking leniency after realizing they’ve filed a lengthy brief riddled with AI hallucinations.

It doesn’t matter where on the Am Law 100 food chain you are: the AI psychedelic experience can come for anyone who lets their editorial standards slip.

Sullivan & Cromwell partner Andrew Dietderich sent Chief Bankruptcy Judge Martin Glenn of the Southern District of New York a letter, dated Saturday, that will live forever in the Biglaw Hall of Hilarity. Dietderich informed the court that he had learned on Thursday that the firm’s emergency motion in the Chapter 15 case of Prince Global Holdings, the BVI-incorporated husk of a Cambodian forced-labor scam conglomerate, had gotten high on its own supply of AI tools.

> The inaccuracies and errors in the Motion include artificial intelligence (“AI”) “hallucinations.” “Hallucinations” are instances in which artificial intelligence tools fabricate case citations, misquote authorities, or generate non-existent legal sources. We deeply regret that this has occurred.
>
> The Firm maintains comprehensive policies and training requirements governing the use of AI tools in legal work. These safeguards are designed to prevent exactly this situation. The Firm’s policies on the use of AI were not followed in connection with the preparation of the Motion.
>
> In addition, the Firm has general policies and training requirements for the proper review of legal citations. Regrettably, this review process did not identify the inaccurate citations generated by AI, nor did it identify other errors that appear to have resulted in whole or in part from manual error.

We hear a lot about the “safeguards” that lawyers put up around AI, but at the end of the day it feels like empty PR talk for “we like to think we’re editing what we send out the door.” Which, in fairness, is the ultimate safeguard.

There are great tools out there designed to reduce the risk of a pernicious hallucination. And these tools will give lawyers a leg up when it comes to tamping down errors early. But there’s no substitute for a junior having to print up the cases and do the meticulous checking… and then the midlevel doing the exact same thing. That’s the manual review process the letter references. It’s inefficient, but perfection isn’t intended to be cheap and easy. That is, in fact, why someone hires Sullivan & Cromwell in the first place.

Dietderich goes on to explain at some length that the firm has a whole program. Two required training modules. Tracked completions. Office Manual language instructing lawyers to “trust nothing and verify everything.” Policies! Mandatory training! Verification requirements! And yet.

This is what we’re talking about when we say AI is in the process of making lawyers dumber. In the earliest days of AI, it was easy to put all the blame on the human lawyers failing to maintain best editing practices. But as the technology advances and AI providers boast that they’ve automated more and more steps in the process, the human can enter the workflow later in the game, and that can subconsciously undermine the editing approach. We don’t know how automated the S&C workflow is, but it’s all a continuum: once AI joins the workflow, the clock starts ticking on someone looking at fully artificially generated work product and taking a slightly lighter red pen to the output. Before long, that’s going to miss something.

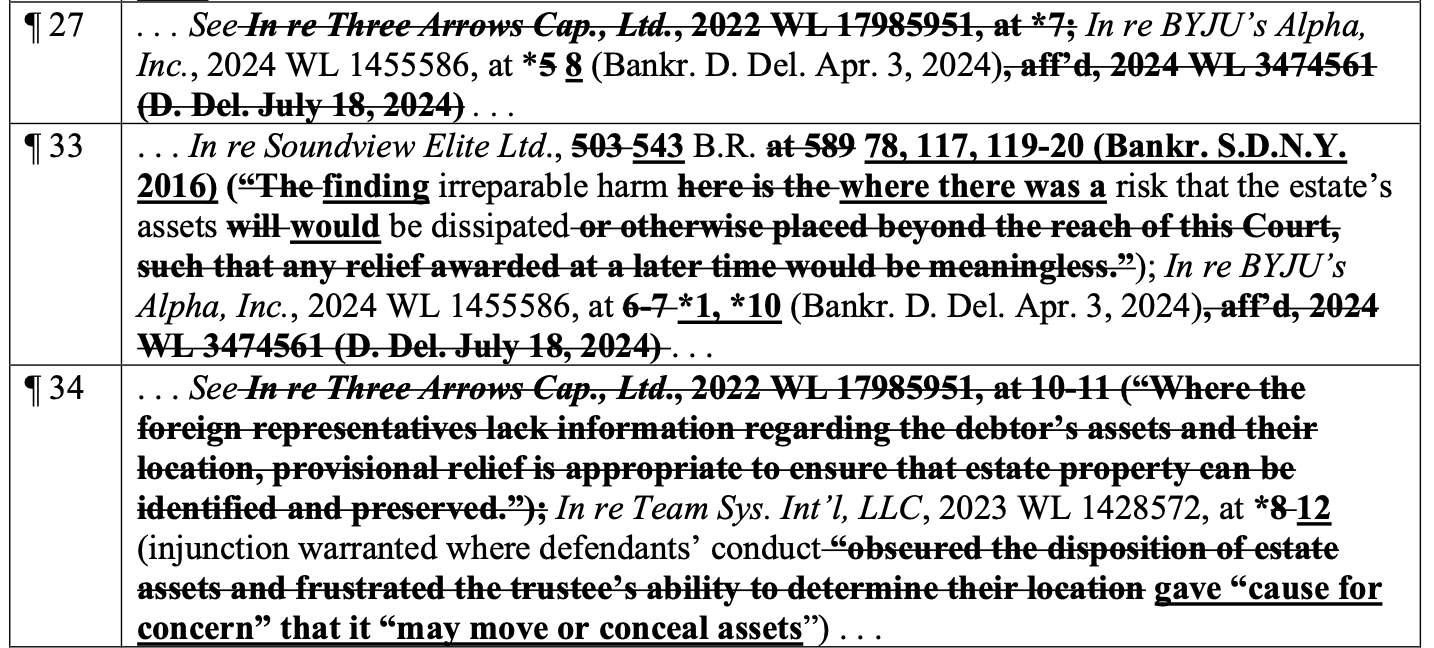

Schedule A to the letter catalogs the damage across the motion, the verified petition, the joint administration motion, the scheduling motion, and a couple of declarations: roughly 40 corrections. Some substantive, some less so, all embarrassing. The fixes include wrong pin cites, wrong volume numbers, and parenthetical quotes that just… aren’t in the cases.

Build all the AI policies you want, but there is no substitute for having a human (preferably multiple humans) print everything out, take a ruler and a red pen, and go line by line cross-checking everything. It’s tedious work for the lawyers and expensive work for the clients, but it’s better than having to write… this letter.

This is not a Sullivan & Cromwell problem. This is a profession problem. We have been covering AI hallucination sanctions cases so relentlessly that Damien Charlotin has now catalogued over a thousand of them. Tools like BriefCatch’s RealityCheck exist specifically because firms can’t be trusted to run this validation themselves.

The problem cuts across the law firm equivalent of class lines. It’s not just overwhelmed solo practitioners or overeager mid-tier firms looking to punch above their weight. Every firm will deal with this soon enough. They can either accept that using AI on the front end doesn’t alleviate the back-end labor, or they can accept writing letters to judges after the fact.

One last thing. The corrections to the Chissick Declaration include Bluebook-style fixes to citations of Amnesty International and UN human rights reports about forced labor and trafficking in Cambodia. Those aren’t AI hallucinations, just the simple human mistakes that arise from embracing the unhinged rules memorialized in the Bluebook. But they are a reminder of what this case is actually about: people held behind barbed wire and forced to run pig-butchering scams. The JPLs are trying to trace billions of dollars in crypto to make victims whole. They need a recognition order to do it. And they lost a couple of weeks of runway because S&C didn’t check its work.

Earlier: Has AI Managed To Make Lawyers Even Dumber?

Biglaw Firm ‘Profoundly Embarrassed’ After Submitting Court Filing Riddled With AI Hallucinations

Am Law 100 Firm Accused Of Filing Brief Riddled With AI Hallucinations… AGAIN!

Prosecutorial Error: ‘AI Hallucinations’ Are Popping Up In Criminal Cases

New Tool Catches AI Hallucinations In Legal Briefs

Understanding AI Hallucinations: Making Sure You Don’t End Up At The Wrong Stop

Legal AI Might Be Accurate… And Still Not Right

Joe Patrice is a senior editor at Above the Law and co-host of Thinking Like A Lawyer. Feel free to email any tips, questions, or comments. Follow him on Twitter or Bluesky if you’re interested in law, politics, and a healthy dose of college sports news. Joe also serves as a Managing Director at RPN Executive Search.