The legal technology company Altorney today announced the general availability of MARC, a generative AI-powered document review system designed to automate first-pass review decisions before documents enter traditional review platforms.

After first announcing MARC last March and going through a pilot period with corporate legal departments, the company is now releasing the product for general availability to corporate legal teams, litigation service providers and law firms.

The Problem MARC Addresses

The product tackles a core inefficiency in e-discovery workflows: organizations typically load entire document sets into expensive review platforms, only to cull large portions as non-responsive. Shimmy Messing, Altorney’s CEO and co-founder, says this approach creates unnecessary costs and security risks.

“If you’re loading your million documents into a review platform, as an example, and then immediately culling out 800,000 of them for not hitting keywords or not being part of TAR or whatever, you still have these 800,000 documents sitting there in your database that you’re paying for and that are exposed from a risk factor after leaving your corporate environment,” Messing said during a demonstration of the product for LawSites.

MARC’s approach is to automate the culling and initial review decisions before documents reach the review platform, ideally within the organization’s own environment. This means only relevant documents – already tagged with first-pass decisions on issues like privilege, confidentiality and responsiveness – are loaded into expensive hosting platforms.

How MARC Works

MARC operates as a text analytics tool that sits between data collection and the review platform. The system is agnostic about which large language model (LLM) it uses. Organizations can deploy MARC with Altorney’s provided Llama model installed locally, or integrate it with their preferred approved models, including those from Azure or OpenAI.

MARC can operate entirely within an organization’s firewall, with no data transmitted externally. “All the data can stay there,” Messing said. “Nothing has to go out to OpenAI or Azure AI – it can all be contained in a local environment.”

This approach provides security while also reducing costs, as local LLMs avoid the per-token charges associated with cloud-based AI services.

Rachi Messing, Altorney’s co-founder, said that installation typically requires just 30-40 minutes of IT time, after which the system is largely self-managing.

Protocol Analysis, Not Prompt Engineering

Among MARC’s distinguishing features is its deliberate avoidance of requiring prompt engineering by users. Rather than requiring users to craft precise prompts – a skill Rachi Messing described as “really hard to master” and prone to inconsistency – MARC uses what it calls a “protocol analysis” approach.

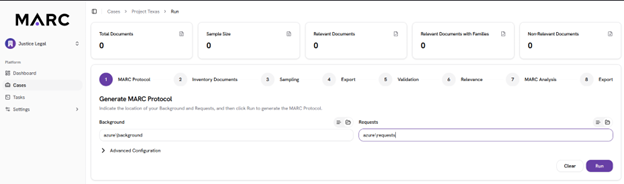

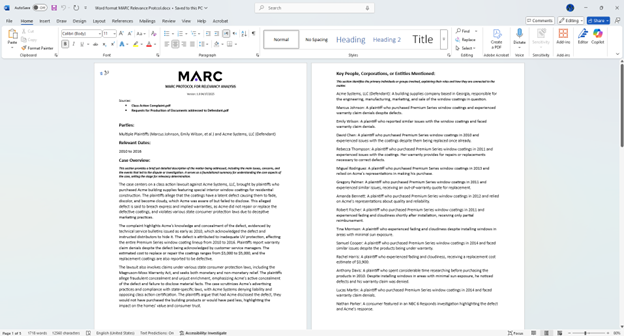

Creating the MARC relevancy protocol from the background materials.

With this approach, users upload background materials about a case into a folder. These materials might include complaints, subpoenas, counterclaims, pleadings, or even informal documents like an email from in-house counsel outlining a new matter or an HR complaint in an internal investigation.

MARC then generates a comprehensive protocol document in Microsoft Word format. This protocol includes:

- Identification of all parties involved.
- Relevant date ranges.
- An overview of the matter.
- Key individuals and their roles.
- Relevant technologies and products.
- Different themes of the case.
- Specific issues to identify within the dataset.

Attorneys can then edit this Word document directly, adding missing individuals, removing irrelevant parties, narrowing overly broad themes, or adjusting other parameters.

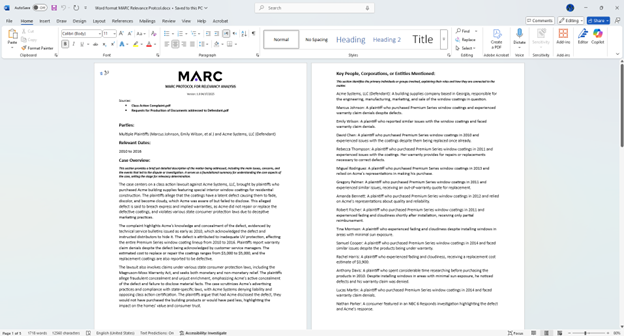

Example of the protocol created by MARC, which the attorney can edit and resubmit.

The edited protocol is uploaded back into MARC, which then uses it as the foundation for all subsequent analysis.

This approach keeps the workflow in familiar territory for legal professionals, Rachi Messing said. “There’s no reason we need attorneys to become prompt engineers, but they love editing Word docs.”

Processing and Validation

Once the protocol is finalized, MARC can ingest data from multiple sources: text files on a file system, Microsoft Purview exports from M365, or directly from Relativity databases.

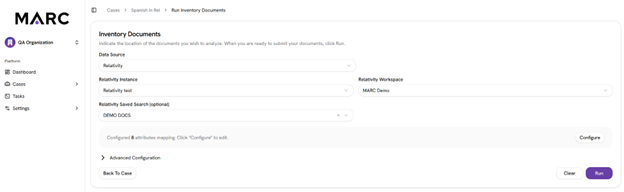

The system includes an integration that allows users to point MARC at specific saved searches within Relativity without actually moving the data.

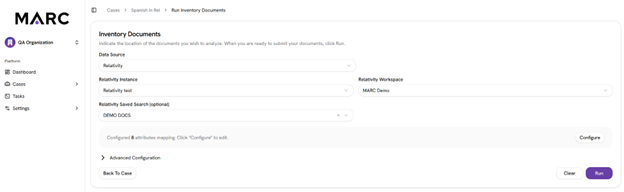

Integration with Relativity to analyze docs based on saved search.

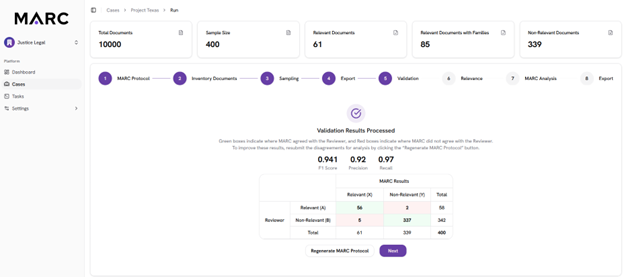

MARC’s results can be verified through a sampling and validation workflow. The system automatically determines the statistically valid sample size needed, analyzes those documents according to the protocol, and tags them as relevant or not relevant at a low per-document cost.
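
Altorney did not say how MARC arrives at its sample size. For readers curious what a "statistically valid sample size" calculation usually looks like, one common approach is Cochran's formula with a finite population correction. The sketch below is only an illustration of that general technique, not MARC's actual method. The function name, the 95% confidence level (z = 1.96) and the 5% margin of error are all assumptions.

```python
import math


def sample_size(population: int,
                z_score: float = 1.96,          # assumed: 95% confidence level
                margin_of_error: float = 0.05,  # assumed: +/- 5 percentage points
                proportion: float = 0.5) -> int:
    """Estimate a sample size using Cochran's formula.

    Hypothetical helper for illustration only. It does not describe
    how MARC computes its sample sizes.
    """
    if population <= 0:
        raise ValueError("population must be positive")
    # Sample size for an effectively infinite population.
    n0 = (z_score ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    # Finite population correction shrinks the sample for small collections.
    n = n0 / (1 + (n0 - 1) / population)
    return min(population, math.ceil(n))


if __name__ == "__main__":
    for docs in (1_000, 200_000, 1_000_000):
        print(f"{docs:>9,} documents -> sample of {sample_size(docs)}")
```

Under these assumptions the required sample grows very slowly with collection size, which is why validating a sample is far cheaper than reviewing the full set.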

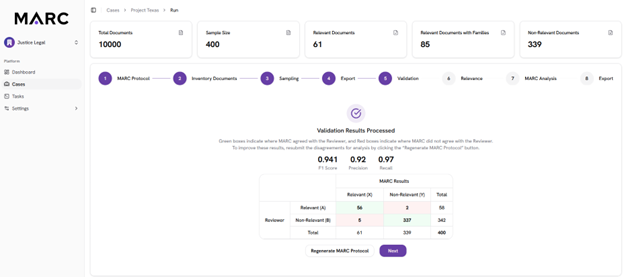

Statistical sampling and validation.

These sampled documents can be pushed to Relativity or exported via load file for attorney review.

Once attorneys validate the sample, their decisions are compared against MARC’s determinations. If discrepancies exist, the system can regenerate the protocol, analyzing what needs to change to correctly classify the disputed documents without affecting already correct decisions.
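
To make the comparison step concrete, here is a minimal sketch of how attorney decisions on a sample might be scored against an automated tool's determinations. It is an illustration under stated assumptions, not Altorney's implementation. The document IDs, the `compare_decisions` function and the `Comparison` type are hypothetical, and recall and precision are standard review metrics that the article does not attribute to MARC.

```python
from dataclasses import dataclass


@dataclass
class Comparison:
    agreement_rate: float    # share of sampled documents where both agree
    recall: float            # share of attorney-relevant docs the tool also tagged relevant
    precision: float         # share of tool-relevant docs the attorney confirmed
    disputed_ids: list[str]  # documents to examine when refining the protocol


def compare_decisions(attorney: dict[str, bool], tool: dict[str, bool]) -> Comparison:
    """Compare relevance calls (True = relevant) on documents both sides reviewed."""
    shared = sorted(attorney.keys() & tool.keys())
    if not shared:
        raise ValueError("no documents were reviewed by both sides")
    true_pos = sum(1 for d in shared if attorney[d] and tool[d])
    false_pos = sum(1 for d in shared if not attorney[d] and tool[d])
    false_neg = sum(1 for d in shared if attorney[d] and not tool[d])
    disputed = [d for d in shared if attorney[d] != tool[d]]
    return Comparison(
        agreement_rate=1 - len(disputed) / len(shared),
        recall=true_pos / (true_pos + false_neg) if true_pos + false_neg else 1.0,
        precision=true_pos / (true_pos + false_pos) if true_pos + false_pos else 1.0,
        disputed_ids=disputed,
    )


if __name__ == "__main__":
    # Hypothetical sample of four documents.
    attorney_calls = {"DOC-1": True, "DOC-2": True, "DOC-3": False, "DOC-4": False}
    tool_calls = {"DOC-1": True, "DOC-2": False, "DOC-3": False, "DOC-4": True}
    print(compare_decisions(attorney_calls, tool_calls))
```

The list of disputed documents is the useful output here, since those are the items a team would examine when deciding how the protocol should change.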

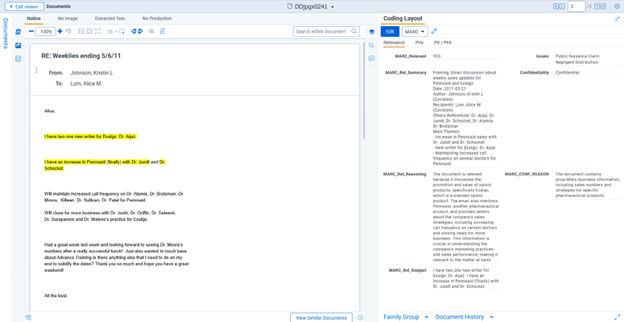

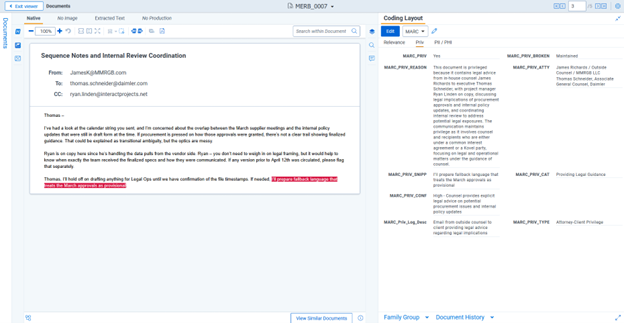

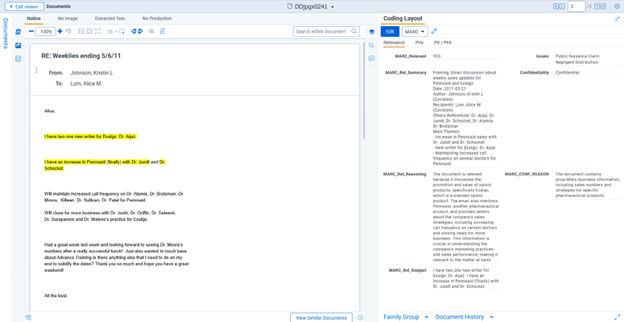

Viewing a relevant result in Relativity.

This iterative process continues until the legal team is satisfied with MARC’s performance. Then the full dataset is processed, at a rate of over one million documents per 24 hours.

Deep Analysis Capabilities

Beyond simple relevance determinations, MARC can perform multiple types of analysis in a single pass, all included for a single additional cost. These analyses include:

Privilege Review: MARC analyzes documents for attorney-client privilege and work product protection, providing reasoning for each determination, identifying parties involved, noting whether privilege was potentially waived by third-party involvement, assigning confidence levels, and automatically generating privilege descriptions suitable for privilege logs.

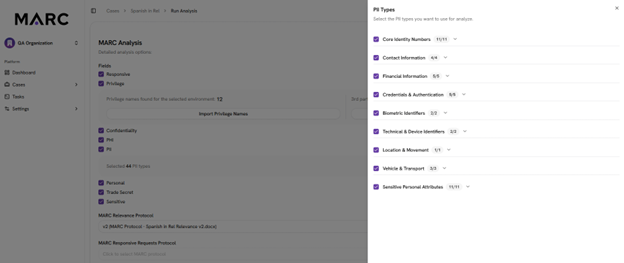

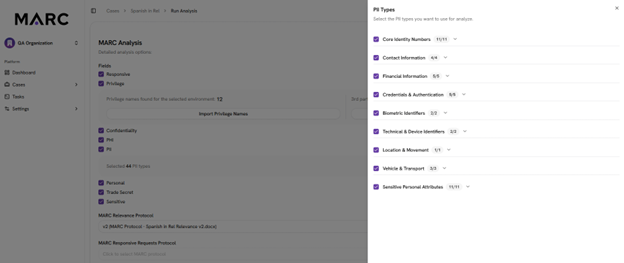

PII analysis.

PII and PHI Detection: The system identifies personally identifiable information and protected health information with granular control over what types to flag. Users can specify, for example, that they only want to identify financial information and health information while ignoring personal email addresses or phone numbers. MARC performs entity analysis, associating information across a document even when, for instance, a person’s name appears on page two and their Social Security number on page seven.

Issue Coding: The system can tag documents according to case-specific issues defined in the protocol.

Confidentiality Analysis: MARC evaluates documents for confidentiality designations, including trade secrets and other sensitive business information.

Hot Document Identification: The system can flag potentially significant documents requiring priority review.

Foreign Language Processing: MARC automatically translates and summarizes documents in foreign languages, allowing English-language protocols to analyze non-English documents and providing summaries in English for reviewers.

Output and Transparency

For every document it processes, MARC provides not just a decision but also its reasoning.

In the demonstration, one example showed MARC tagging a document as not relevant. Its explanation detailed that, although the document mentioned UV protection technology, which could potentially make it relevant, it concerned exterior paint rather than interior window coatings, making it irrelevant to the specific case.

This transparency serves multiple purposes. It allows legal teams to understand and validate the AI’s decision-making process, provides documentation for defensibility, and helps identify where the system might need refinement through protocol adjustments.

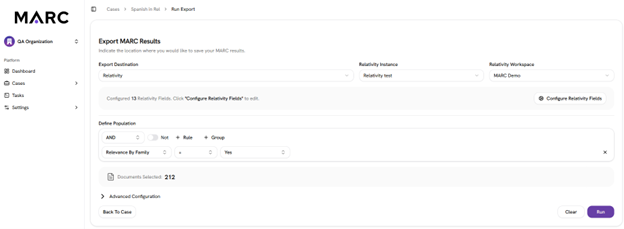

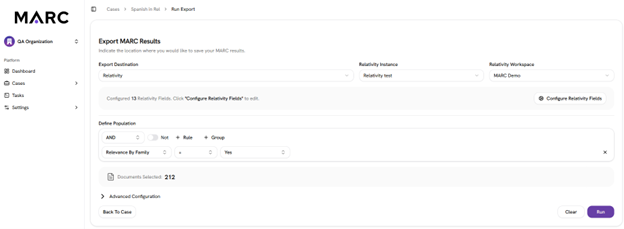

Export using Relativity integration.

Documents are also enriched with summaries and, for relevant documents, snippets highlighting the most pertinent portions. All this information can be exported or integrated directly back into Relativity.

Cost Savings and Predictability

Altorney says that in the pilot program testing of MARC, users saw significant efficiency gains.

The company highlighted one Fortune 500 company case involving more than 200,000 documents where MARC achieved 62% review cost savings and 78% hosting cost savings.

The company claims an 80% reduction in documents transferred to hosted review platforms and an 86% reduction in cycle time compared to traditional review.

Its costs are also predictable with a high degree of precision, the company says. In one proof-of-concept with 30,000 documents, Altorney provided the customer with a budget estimate of $2,500. The actual cost came in at $2,506 – a level of budget predictability the customer’s AI team said they had never before had with an AI-based product.
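
For a sense of scale, the arithmetic behind that proof-of-concept can be worked out from the three figures reported above. The short sketch below uses only those figures; the helper names are hypothetical. The per-document number is a blended average for that one engagement, since the article does not say which analyses were run, and should not be read as Altorney's price list.

```python
def budget_variance(estimate: float, actual: float) -> float:
    """Signed overrun expressed as a fraction of the original estimate."""
    return (actual - estimate) / estimate


def cost_per_document(total_cost: float, documents: int) -> float:
    """Blended average cost per document for one engagement."""
    return total_cost / documents


if __name__ == "__main__":
    # Figures as reported for the 30,000-document proof-of-concept.
    estimate, actual, documents = 2_500.00, 2_506.00, 30_000
    print(f"Variance from estimate: {budget_variance(estimate, actual):.2%}")
    print(f"Average cost per document: ${cost_per_document(actual, documents):.4f}")
```

On those figures, the actual cost exceeded the estimate by less than a quarter of one percent.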

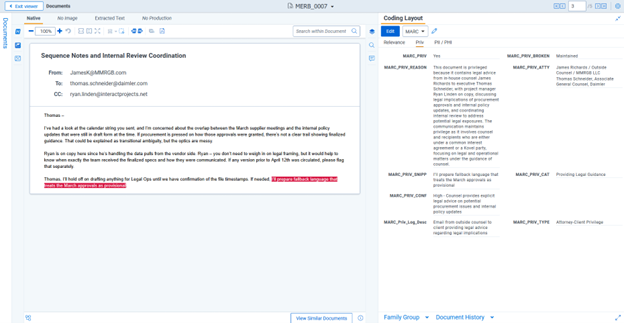

Viewing a privilege result in Relativity.

Rachi Messing emphasized that beyond cost savings, the technology addresses human inconsistency in review. “You give the same document to four different attorneys and you’ll come out with four different decisions.”

In tests comparing MARC’s decisions to completed human reviews, customers found that discrepancies often revealed human reviewers had been either overbroad or overly narrow, allowing them to tune MARC to find what they actually needed.

An Expanding Market

When Altorney initially launched MARC in March, it focused exclusively on corporate legal departments for behind-the-firewall deployment.

The reasoning for that limited focus was both technical and strategic. The company believed that culling should happen within the corporate environment before data leaves for external review platforms, reducing both costs and security risks.

However, the market quickly pushed the company to expand its approach.

Some corporate customers expressed strong interest in using the product but indicated that internal security and IT approval processes could take up to two years. These customers asked to host MARC at their preferred litigation service providers, which would enable them to accelerate deployment while still achieving cost savings from reduced data volumes.

Once the LSPs were on board and began using the product, they wanted to also be able to use it with their law firm customers. That led Altorney to open the platform to law firms.

“We’ve now opened it up and a lot of LSPs and law firms are hopping on board and have it installed in their environments as well,” Shimmy Messing said.

Pricing Model

MARC uses volume-based pricing with two tiers.

The initial relevance determination costs just pennies per document or less.

Additional analysis – including privilege, confidentiality, issue coding, PII, PHI and other determinations – is also priced at a single per-document rate of just a few cents, depending on volume.

Notably, organizations can rerun analyses without additional charges if requirements change, such as modifications to a confidentiality order.

Humans in the Loop

Despite the automation, Altorney emphasizes that MARC is designed to keep humans involved in the review process.

“GenAI doesn’t eliminate the need for human oversight – but it enables the right human to be in the right place at the right time to optimize their value,” said Stephen Goldstein, the company’s chief product officer.

Rather than replacing human reviewers entirely, Altorney’s vision for MARC is to transform first-pass review into quality control review, allowing reviewers to then work two to three times faster on a smaller set of more important documents.

Shimmy Messing acknowledged that while some users might eventually feel comfortable producing documents straight from MARC without human review, most currently prefer having “eyes on everything,” using MARC’s determinations to accelerate rather than replace human judgment.

‘The Ultimate Truth Seeker’

Altorney was founded by brothers Shimmy and Rachi Messing in late 2021. The company initially focused on its Altorney platform, a marketplace for document reviewers and legal talent, which launched at Legalweek in 2022.

MARC emerged from a collaboration with Goldstein, now the chief product officer and formerly global director of practice support at Squire Patton Boggs. Last year, he approached the Messings with work he’d been doing on using gen AI for first-pass review. After evaluating his technology, they decided to productize it, spending the latter half of 2024 and early 2025 developing MARC into a commercial product.

The product name honors the founders’ late father, Marc Messing, an attorney, rabbi and educator who died of pancreatic cancer in 2021. Shimmy Messing described him as “the ultimate truth seeker,” making the name appropriate for a tool designed to find truth in document sets.

Both founders have extensive backgrounds in the e-discovery industry, having both started their careers at Merrill Corporation in the early 2000s.

With MARC now generally available, Shimmy Messing told me, Altorney positions itself as a “boutique coding shop” creating “elegant, unconventional legal software” that addresses persistent pain points in legal work – first with legal talent sourcing through its Altorney platform, and now with AI-powered document review through MARC.