A few weeks ago, Gordon Rees found itself on the wrong end of a court filing flagging a number of misleading if not completely wrong citations that looked an awful lot like someone told ChatGPT “make me legal filing!” and hit enter. This would be a problem for any firm, but for Gordon Rees, this was at least its third brush with AI trouble: the firm had admitted in October to filing a brief riddled with AI hallucinations and then, in December, received a reprimand for filings with citations that “do not support the specific explanatory phrase.”

As we wrote about the most recent accusation, “Whether any specific citation was generated by AI — indeed, whether any specific citation is even wrong as opposed to merely debatable — opposing counsel now has every incentive to scrutinize any citation out of the firm with a jeweler’s loupe.”

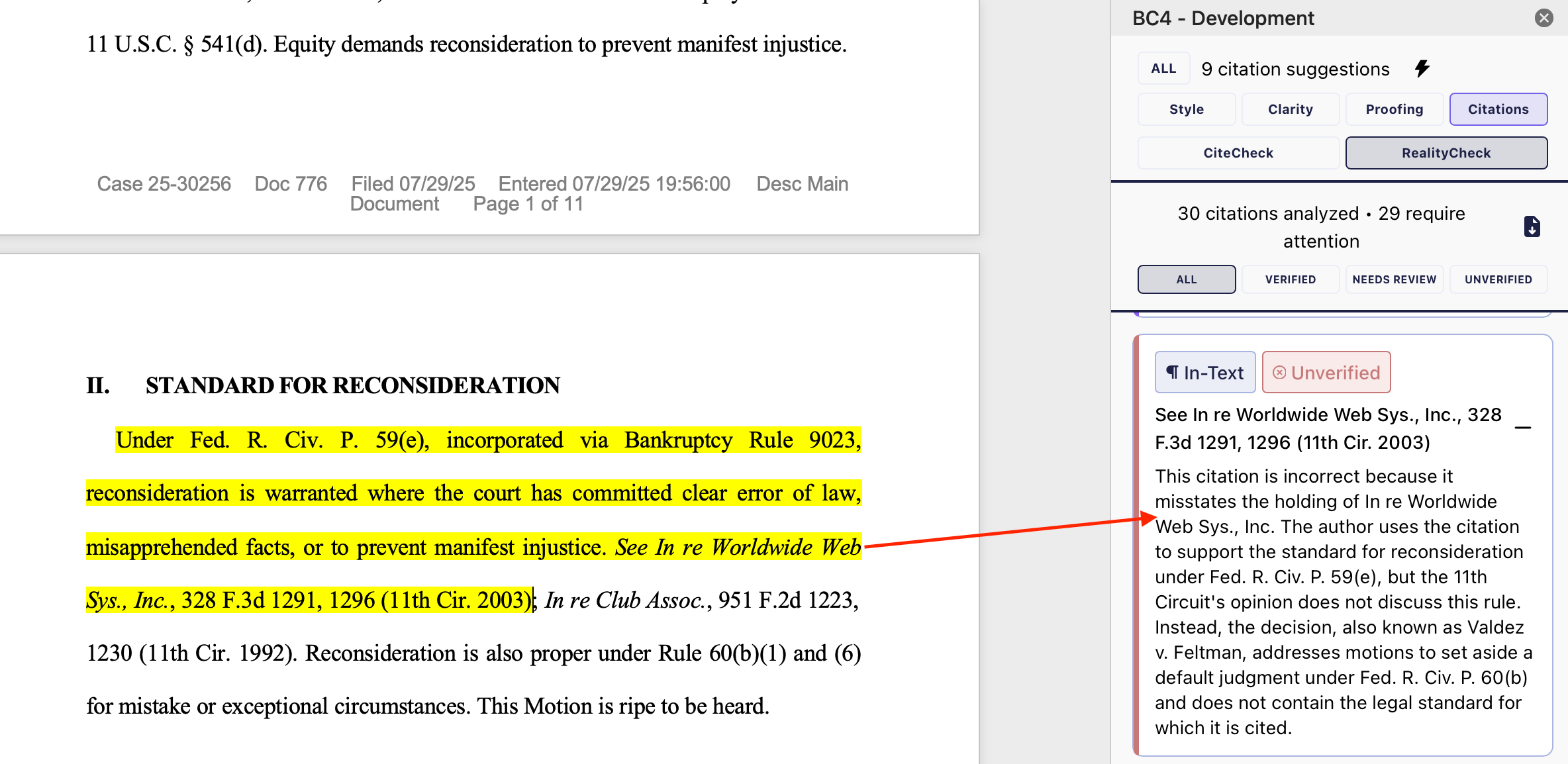

After that article, BriefCatch founder Ross Guberman offered me a sneak peek at RealityCheck, the company’s new authority verification tool. Essentially, the product picks up where my jeweler’s loupe analogy left off, providing a superpowered hallucination check for lawyers. Run against the original brief from the October Gordon Rees story, which the firm has already acknowledged contained hallucinations, the RealityCheck tool delivered exactly what you’d want as opposing counsel. Or, ideally, as the senior partner reviewing your own brief before signing your name to a bunch of hallucinatory nonsense.

At the time, RealityCheck’s splashy launch announcement remained a few weeks off, but with its Legalweek-timed rollout, we can now talk a little about the new essential tool for the LitigationSlop era.

Lawyers, ideally, painstakingly review every case in every filing. But, at this point, you can’t even trust the Department of Justice to check its briefs, let alone your adversaries (or, perhaps, your first-year associates). RealityCheck isn’t trying to replace the process of cite checking, but it is trying to get it done faster and with more certainty.

The tool uses a two-layer verification process, combining deterministic citation validation (checking reporter volumes, court identifiers, and case names against authoritative legal databases, all without any AI involvement) with AI-assisted analysis that then evaluates the quoted language to make sure it actually appears in the cited opinion and actually supports the proposition it’s cited for. Every citation is then scored visually with a Green-Verified, Yellow-Caution, or Red-Incorrect label and explained for the reviewer.
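For readers who think better in code, here is a rough sketch of how a two-layer check of this general shape could be wired together. To be clear, this is not BriefCatch’s implementation, which isn’t public. Everything here is a hypothetical stand-in: the `KNOWN_OPINIONS` dictionary plays the role of an authoritative database, the case name and the “Ex.2d” reporter are invented placeholders, the citation pattern handles only the simplest one-token reporter, and the second layer uses a plain verbatim-text match in place of AI-assisted analysis. How failures map to the three labels is likewise an assumption.

```python
import re
from dataclasses import dataclass

# Hypothetical stand-in for an authoritative legal database:
# (volume, reporter, first page) -> (case name, opinion text).
# The case and the "Ex.2d" reporter are invented placeholders.
KNOWN_OPINIONS = {
    ("1", "Ex.2d", "100"): (
        "Example v. Sample",
        "We hold that a notice must be filed within thirty days.",
    ),
}

# Simplified pattern: "Case Name, <volume> <reporter> <page>".
# Real reporter abbreviations (e.g. multi-word ones) need a richer parser.
CITATION_RE = re.compile(
    r"^(?P<name>.+?),\s+(?P<volume>\d+)\s+(?P<reporter>\S+)\s+(?P<page>\d+)"
)


@dataclass
class Result:
    label: str   # "Green-Verified", "Yellow-Caution", or "Red-Incorrect"
    reason: str  # short explanation for the reviewer


def normalize(text):
    """Lowercase and collapse whitespace so comparisons ignore formatting."""
    return " ".join(text.lower().split())


def check_citation(citation, quote):
    # Layer 1: deterministic validation, no AI involved.
    match = CITATION_RE.match(citation)
    if match is None:
        return Result("Red-Incorrect", "citation could not be parsed")

    key = (match["volume"], match["reporter"], match["page"])
    record = KNOWN_OPINIONS.get(key)
    if record is None:
        return Result("Red-Incorrect", "no opinion found at this location")

    case_name, opinion_text = record
    if normalize(case_name) != normalize(match["name"]):
        return Result(
            "Red-Incorrect",
            f"citation resolves to a different case: {case_name}",
        )

    # Layer 2: a verbatim-match stand-in for AI-assisted analysis.
    # It checks only that the quote appears, not that it supports
    # the proposition it is cited for.
    if normalize(quote) in normalize(opinion_text):
        return Result("Green-Verified", "quoted language appears in opinion")
    return Result("Yellow-Caution", "case exists, but quote was not found")


if __name__ == "__main__":
    result = check_citation(
        "Example v. Sample, 1 Ex.2d 100",
        "a notice must be filed within thirty days",
    )
    print(result.label, "-", result.reason)
```

The ordering is the point of the sketch: the cheap, deterministic lookup runs first and can reject a citation outright, so the fuzzier second layer only ever sees citations that resolve to a real opinion.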

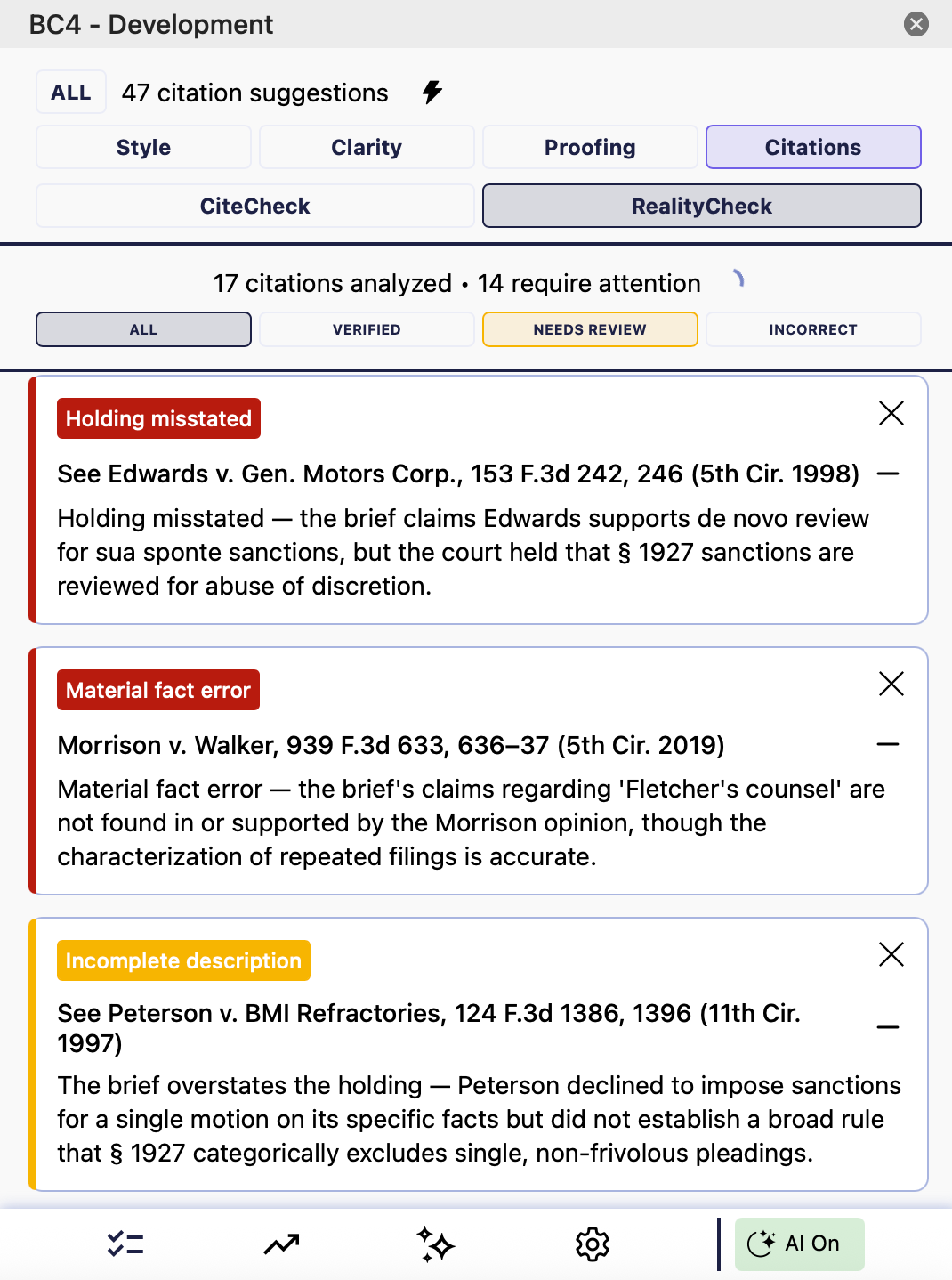

BriefCatch performed a detailed case study on the Fifth Circuit’s recent decision in Fletcher v. Experian Information Solutions, where the court flagged fabricated quotations, misstated holdings, and citations resolving to entirely different cases. This is what the offending filing would look like for a RealityCheck user:

Unfortunately, this isn’t a problem that’s likely to go away. Researcher Damien Charlotin has now catalogued over 1,000 legal cases involving AI hallucinations. Lawyers have started blaming legal AI research tools themselves for introducing errors into their briefs (which is a bit like blaming a vending machine for not giving you a steakhouse dinner), but it speaks to the reality that lawyers increasingly rely on tools for accuracy, and mistakes follow. This is how those mistakes get caught.

BriefCatch is making the tool available to its federal and state court clients. As Guberman put it: “Once courts are running filed briefs through RealityCheck, the calculus changes for every litigator. The question isn’t whether to verify your citations. It’s whether you want the court to find the errors before you do.”

Smart play to put the screws to the entire legal market like that.

Joe Patrice is a senior editor at Above the Law and co-host of Thinking Like A Lawyer. Feel free to email any tips, questions, or comments. Follow him on Twitter or Bluesky if you’re interested in law, politics, and a healthy dose of college sports news. Joe also serves as a Managing Director at RPN Executive Search.